A Story About Failure, Fragility, and the Origins of Cybersecurity Thinking

It begins, as many defining moments in computing do, not with a breakthrough but with a disruption so small, so mundane, that it might have gone unnoticed in any other era, in any other machine, under any other set of assumptions. Yet it gave us the first documented computer bug, and with it some lasting lessons for cybersecurity.

An Ordinary Day, An Extraordinary Failure

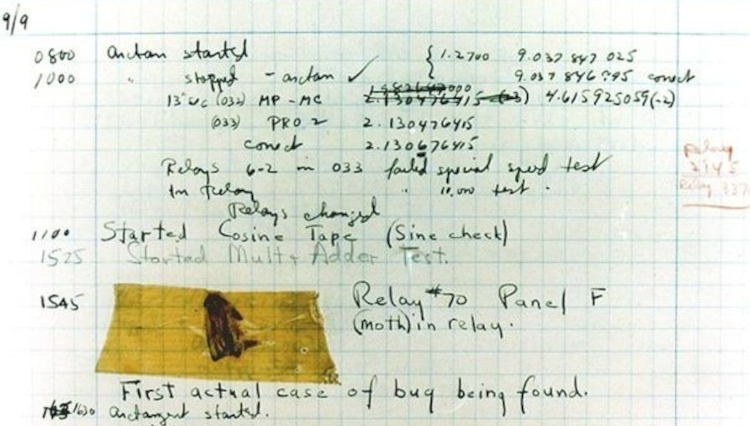

On September 9, 1947, inside Harvard’s Computation Laboratory, engineers stood before the Harvard Mark II, a machine of relays and motion, vast enough to exceed any single operator’s full understanding, and sensed, with practiced intuition, that something was wrong. Not catastrophically, not visibly, but subtly and persistently, as though the machine had drifted out of alignment with its intended logic.

There were no dashboards, no alerts. Only anomalous behavior and the growing realization that the system could no longer be trusted.

Opening the Machine

So they did what engineers have always done. They looked.

Panels came off, relays were inspected, circuits traced physically rather than abstractly, until the cause revealed itself. Not a design flaw or miscalculation, but a moth lodged between relay contacts, interrupting current with its mere presence.

It was almost absurd in its simplicity. The most advanced machine of its time halted by something so fragile. They removed it, restored operation, and then, crucially, documented it, taping the moth into a logbook with the understated note: “First actual case of bug being found.”

From Curiosity to Discipline

The significance of that moment lies not in the insect, but in the decision to record it, to transform failure into knowledge.

In doing so, those engineers established something foundational: that faults are not just inconveniences, but artifacts to be studied. The term “bug” may have existed before, but here it became empirical, observable, and shareable. A precursor to the structured practices that now define engineering and cybersecurity alike.

When Bugs Became Attack Surfaces

Over time, the nature of the bug evolved. What was once a physical obstruction became a logical flaw: subtle, invisible, and increasingly consequential. And unlike the moth, modern bugs do not remain passive.

They are discovered, cataloged, and exploited. A buffer overflow becomes a foothold. A race condition becomes a privilege escalation path. A misconfiguration becomes exposure at scale.

The shift is profound: bugs are no longer just failures. They are opportunities, often for someone else.

Debugging Versus Defending

The engineers of 1947 were debugging, assuming benign failure. Modern practitioners operate under a different premise: that failure may be adversarial.

This shift, from correction to defense, defines cybersecurity. The act of tracing a fault remains, but the context has changed. We are no longer simply restoring systems; we are protecting them against entities that actively seek out their weaknesses.

The Persistence of Complexity

It is tempting to imagine early systems as simpler, but the Mark II was already complex enough that its failure was not immediately obvious, complex enough that a single unforeseen interaction could halt it entirely.

Today’s systems are not different in kind, only in scale and abstraction. A moth stopped a relay. A misconfigured policy can now expose an enterprise.

The principle holds. Systems fail at the edges of what we understand.

The Adversarial Reality

The engineers could not have anticipated the moth. But their response created a framework for dealing with the unexpected.

Today, the unexpected is constant. Zero-days emerge without warning, threat actors probe continuously, and the most dangerous vulnerabilities are often the ones discovered first by adversaries. The question is no longer whether bugs exist, but who finds them first.

From Logbooks to Threat Intelligence

That preserved moth represents more than a curiosity. It is, in effect, an early incident report.

Where engineers once taped evidence into logbooks, we now assign identifiers such as CVE IDs, track vulnerabilities globally, and share intelligence across ecosystems. The tools have evolved, but the core act remains unchanged. We observe, we analyze, we learn.

The Enduring Lesson

The first computer bug matters not because of what it was, but because of what it revealed: that even the most advanced systems are fragile, that failure is inevitable, and that understanding those failures is the only path to resilience.

Because in every system, no matter how advanced, there is always another bug waiting. And in today’s world, the defining question is simple. Who will find it first?